Most executives are asking a simple question:

“Do the major AI chatbots know our brand?”

But in practice, that question hides two different questions:

- Recognition: If we ask the model about us, does it know who we are?

- Activation: When people ask relevant questions, does the model actually bring us up?

If you measure only one, you can make confident decisions for the wrong reason.

- You can feel safe because “AI knows us” while the AI never recommends you.

- Or you can feel excited because “AI mentions us” while the AI doesn’t reliably recognize who you are (which can create confusion and risk).

This article explains a simple, two-score system for AI brand measurement, based on a benchmark of 1,000 entities tested across a 17-model panel.

The short version

In our benchmark:

- Entity Score (how many models know about our entity?) and Question Score (were we named in the AI answer?) move together, but they are not the same thing. The correlation between the two is about 0.65 (strong, but not perfect).

- A big group of brands sit in the “AI knows you but won’t say you” zone:

- 319 out of 1,000 entities were recognized but never mentioned in answers.

- Another group shows up in answers even though the models don’t reliably “recognize” them:

- 28 out of 1,000 entities were not recognized but were still mentioned at least once.

- Many entities are simply not mentioned at all:

- 355 out of 1,000 were never mentioned in any answer, whether or not the models recognized them.

Bottom line: Recognition is not recommendation. You need both scores.

Why this matters now: AI is becoming the new recommendation layer

Ten years ago, the question was, “Do we rank on Google?”

Now it’s, “Do we show up when someone asks an AI assistant what to buy, who to hire, or what to trust?”

That shift changes what “brand awareness” means.

A brand can be:

- Stored in the model’s memory (recognition), but

- Missing from the model’s suggestions (activation).

If you only track recognition, you may think you’re winning when you’re not. If you only track mentions, you may mistake unstable name-drops for real visibility.

The study (in plain terms)

This was not one chatbot and one prompt.

- 1,000 entities

- 17 chatbots / LLMs tested per entity

- 5,070 total prompts

- 86,190 answers

Entities did not all get the same number of questions (most got 4–5; some got 10). That matters because “how often you are mentioned” depends on how many chances you had to be mentioned.
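The panel arithmetic can be checked in a few lines. This is a minimal sketch that assumes every prompt was answered by all 17 models, which is what the reported totals imply; the function name is illustrative and not part of the study.

```python
MODELS_IN_PANEL = 17  # panel size reported in the study


def total_answers(num_prompts: int, models: int = MODELS_IN_PANEL) -> int:
    """Answers collected if every model answers every prompt (assumption)."""
    return num_prompts * models


# Whole benchmark: 5,070 prompts across the panel.
print(total_answers(5070))  # 86190

# Per entity: the denominator grows with the number of questions asked.
print(total_answers(4), total_answers(5), total_answers(10))  # 68 85 170
```

An entity tested with 10 questions has twice as many chances to be mentioned as one tested with 5, which is why mention counts should be compared as rates rather than raw totals.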

The two scores (and the only math you really need)

Go to LLMtel.com and run your free report. Here’s how to interpret it:

1) Entity Score = “Do the models recognize us?”

Entity Score measures recognition.

Entity Score = (Number of models that recognize the entity) ÷ 17

Example:

- If 12 of the 17 models recognize your brand:

Entity Score = 12/17 ≈ 0.71 → about 71% recognition across the panel.

What this tells you:

- Whether your brand exists clearly in the models’ knowledge.

What it does not tell you:

- Whether the models will bring you up in answers.
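The Entity Score formula above can be sketched as a small function. The function and parameter names are illustrative assumptions; only the formula and the 17-model panel size come from the study.

```python
PANEL_SIZE = 17  # number of models in the benchmark panel


def entity_score(models_recognizing: int, panel_size: int = PANEL_SIZE) -> float:
    """Share of panel models that recognize the entity (0.0 to 1.0)."""
    if not 0 <= models_recognizing <= panel_size:
        raise ValueError("models_recognizing must be between 0 and panel_size")
    return models_recognizing / panel_size


# Worked example from the text: 12 of 17 models recognize the brand.
print(f"{entity_score(12):.2f}")  # 0.71
```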

2) Question Score = “Do the models mention us when it makes sense?”

Question Score measures activation.

Each entity was tested with a set of questions. Each question is answered by up to 17 models.

Question Score = (Number of answers that mention the entity) ÷ (Total number of answers)

And the total number of answers is:

Total answers = 17 × (Number of questions asked about that entity)

Example:

- If you ask 5 questions, you get 17 × 5 = 85 total answers.

- If your brand appears in 20 of those answers:

Question Score = 20/85 ≈ 0.235 → about 24% mention rate.

What this tells you:

- How often the model brings you up in the kinds of questions customers actually ask.

What it does not tell you:

- Whether the model truly recognizes you cleanly when asked directly.
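The Question Score calculation can be sketched the same way. This version assumes all 17 models answer every question; since the text says "up to 17", a real tally may need to use the actual number of answers received as the denominator. Names are illustrative.

```python
def question_score(answers_with_mention: int, questions_asked: int,
                   panel_size: int = 17) -> float:
    """Share of answers that mention the entity (0.0 to 1.0)."""
    total = panel_size * questions_asked  # assumes every model answered
    if total <= 0:
        raise ValueError("at least one question is required")
    if not 0 <= answers_with_mention <= total:
        raise ValueError("answers_with_mention must be between 0 and total")
    return answers_with_mention / total


# Worked example from the text: 5 questions, 20 answers naming the brand.
print(f"{question_score(20, 5):.3f}")  # 0.235
```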

The main insight: these two scores are related, but different

Across 1,000 entities, recognition and mention are connected but not identical.

We measured the relationship using correlation:

- Correlation ≈ 0.653

In plain language:

- When Entity Score goes up, Question Score tends to go up too.

- But there are many exceptions, and those exceptions are where strategy lives.

A useful way to think about it:

- 0.65 is strong enough to be real

- and weak enough to be dangerous if you assume it’s the whole story

Also important:

- Correlation is not causation.

- But it is a strong signal that “being known” and “being mentioned” are connected, just not the same.
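For readers who want to see how such a correlation is computed, here is a minimal sketch. It assumes the Pearson coefficient, which the article does not specify, and the five score pairs are made-up illustrations, not study data.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length (2 or more values)")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical scores for five entities (illustrative only).
entity_scores = [0.0, 0.25, 0.5, 0.75, 1.0]
question_scores = [0.0, 0.0, 0.1, 0.05, 0.4]
print(round(pearson(entity_scores, question_scores), 2))
```

A result of 1.0 would mean the two scores rise in lockstep; a value between 0 and 1 means they tend to rise together while leaving room for the exceptions described above.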

The “gap” stories executives should care about

1) AI knows you, but won’t say your name

In our benchmark:

- 319 / 1,000 = 31.9% were recognized by at least one model, but never mentioned in answers.

That’s almost one-third.

This is the most common surprise for leadership teams. It feels like:

“We’re in the model’s head… but not in its mouth.”

In classic marketing terms:

- This is awareness without consideration.

2) AI says you, but may not know you cleanly

Also in our benchmark:

- 28 / 1,000 = 2.8% were not recognized by the models (Entity Score = 0), but were still mentioned in answers at least once.

That can happen for several reasons:

- Name confusion (variants, abbreviations, punctuation),

- Generic words being treated like a brand,

- Models “pattern matching” from similar answers,

- Or straight-up hallucination-like behavior.

This matters because it creates executive risk:

- Wrong details attached to your name,

- Brand confusion,

- Or false claims in customer-facing contexts.

3) The answer mention cliff is real

- 355 / 1,000 = 35.5% were never mentioned in the answers at all.

And the typical entity barely shows up:

- Median mention rate was about 2.35%.

In simple terms:

- For the “middle” brand in the dataset, the AI mentions it only about 2 times out of every 100 answers.

This is why most brands feel invisible in AI: statistically, they are.

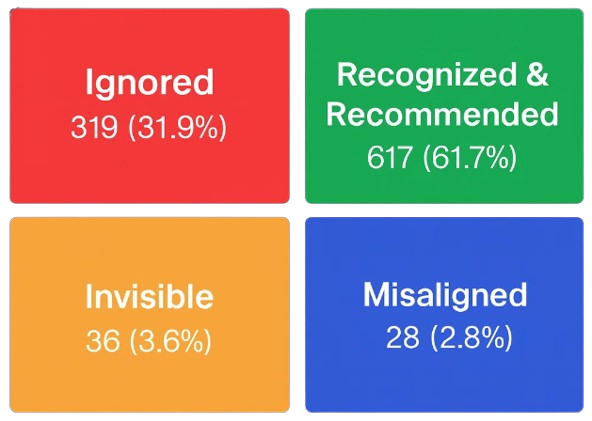

A simple executive framework: the 2×2 map

Plot your brand on two axes:

- Recognition (does AI know who you are?)

- Activation (does AI actually name you in answers?)

From our 1,000-entity study, every brand falls into one of four boxes:

1) Recognized & Recommended – 617 entities (61.7%) – Green box

AI both knows you and brings you up in answers.

- You show up as a relevant option when people ask for recommendations, alternatives, or solutions.

- Executive goal: defend this position, watch for drift across model updates, and track how often you’re recommended versus competitors.

2) Ignored – 319 entities (31.9%) – Red box

AI recognizes your brand but doesn’t mention you.

- The model can explain you if someone asks “What is Brand X?”, but you disappear when they ask “Who should I use?”

- This is the classic latent awareness problem: known, but not considered.

- Executive goal: move from Ignored to Recognized & Recommended by tying your brand to real buyer intents and category language.

3) Invisible – 36 entities (3.6%) – Orange box

AI neither recognizes nor names you.

- You are effectively outside the AI market.

- Executive goal: fix basic identity and presence so you at least become Recognized (and then work upward).

4) Misaligned – 28 entities (2.8%) – Blue box

AI names you, but doesn’t reliably recognize or understand you.

- Mentions may come from ambiguity, guesswork, or confusion with similarly named entities.

- This creates brand risk: wrong facts, wrong category, or wrong associations.

- Executive goal: clean up naming, strengthen structured identity, and turn unstable mentions into clear, Aligned recognition.

This 2×2 grid gives leadership a far better answer than any single “AI score” ever could:

it tells you how AI treats your brand, not just whether it has heard of you.
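The four boxes can be expressed as a simple rule. This sketch uses the thresholds the benchmark figures imply: "recognized" means at least one model recognizes the entity (Entity Score above 0), and "mentioned" means at least one answer names it. The function name is illustrative.

```python
def quadrant(models_recognizing: int, answers_with_mention: int) -> str:
    """Place a brand in one of the four boxes of the 2x2 map."""
    recognized = models_recognizing > 0    # Entity Score above 0
    mentioned = answers_with_mention > 0   # named in at least one answer
    if recognized and mentioned:
        return "Recognized & Recommended"
    if recognized:
        return "Ignored"
    if mentioned:
        return "Misaligned"
    return "Invisible"


print(quadrant(12, 20))  # Recognized & Recommended
print(quadrant(12, 0))   # Ignored
print(quadrant(0, 3))    # Misaligned
print(quadrant(0, 0))    # Invisible

# The four reported counts account for every entity in the benchmark.
reported = {"Recognized & Recommended": 617, "Ignored": 319,
            "Invisible": 36, "Misaligned": 28}
print(sum(reported.values()))  # 1000
```

Note that these are minimum thresholds: a brand recognized by 1 model and one recognized by all 17 land in the same box, so the underlying scores still matter when tracking progress within a quadrant.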

Why the gap happens (no hype, just reality)

Here are the most common reasons a brand can be recognized but not mentioned:

- Default recommendations

Models tend to suggest “safe defaults”: category leaders and widely repeated picks.

- Question-intent mismatch

A brand can be well-known, but not aligned with the specific use-cases being asked.

- Weak category language

If the web doesn’t strongly connect your brand name to the phrases people use (“best payroll software for…”, “enterprise project management tool…”) the model won’t pull you in.

- Name variance

Small changes in your name can split your signal:

- Abbreviations vs full name

- Punctuation

- Subsidiaries vs parent brand

- Domains vs legal name

This is not “SEO trivia.” In AI systems, naming consistency is identity.

What to do (the playbook, by stage)

If you’re in Stage 1: Invisible

(Not recognized and never named)

Your job is basic discoverability: entering the AI ecosystem.

What to focus on

- Establish a consistent brand identity across all major web surfaces.

- Ensure clear product and category language (avoid jargon or vague positioning).

- Build credible third-party references: news mentions, directories, analyst notes, public profiles.

- Use structured formats (schema.org, LinkedIn, Crunchbase, Wikidata, etc.) to create an anchor for the brand.

What success looks like

Your Entity Score begins to rise, and you move from Invisible → Ignored, the first step into the funnel.

If you’re in Stage 2: Ignored

(Recognized but never mentioned)

Your job is activation: moving from awareness to consideration.

What to focus on

- Own the category phrases people actually type into AI assistants.

- Build clear, citable content and third-party references that tie your brand to:

- “best X for Y”

- comparisons

- alternatives

- use-case language

- Strengthen your presence in public, authoritative sources (not just owned media).

- Make your brand easy to recommend, not just easy to explain.

What success looks like

Your Question Score rises while your Entity Score stays strong, moving you from Ignored → Aligned.

If you’re in Stage 3: Misaligned

(Named, but not reliably known)

Your job is stability and clarity: reducing confusion and solidifying identity.

What to focus on

- Remove naming ambiguity (variants, abbreviations, plural/singular issues).

- Verify that when AI mentions your brand, it’s actually you, not a look-alike.

- Strengthen canonical identity signals across the web (structured profiles, consistent descriptions, machine-readable data).

- Create clear, factual content that anchors your brand in its correct category, market, and use cases.

What success looks like

Your Entity Score increases so AI both names and recognizes you, moving you from Misaligned → Aligned.

If you’re in Stage 4: Aligned

(Recognized & correctly named in answers)

Your job is optimization and progression: moving toward consistent recommendation.

What to focus on

- Strengthen the “why choose us” story in high-authority sources.

- Improve clarity around your differentiators and category fit.

- Build deep, trusted signals: case studies, expert reviews, comparisons, benchmarks.

- Expand into new high-intent question clusters your customers care about.

What success looks like

Your Question Score rises consistently, moving you from Aligned → Trusted.

Bonus: Stage 5: Trusted

(Recognized & Recommended in the majority of cases)

Your job is defense: keeping your position while competitors try to earn theirs.

What to focus on

- Monitor drift across model updates and new AI releases.

- Track category shifts: who else is being recommended alongside you?

- Ensure that new products, regions, or offerings have accurate coverage in authoritative sources.

- Expand your presence into adjacent categories so AI sees you as a safe default across more contexts.

What success looks like

You maintain (or grow) the rate at which AI systems recommend you, staying at the Trusted stage.

The dashboard I’d put in a board deck

Keep it simple. Track these quarterly:

- Entity Score (recognition across the 17-model panel)

- Question Score (share of answers where you are mentioned)

- Your quadrant (Recognized & Recommended, Ignored, Invisible, or Misaligned)

- Name variance flag (are mentions split across multiple variants?)

That’s enough to guide strategy without drowning in metrics.
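As one possible shape for that dashboard, the four items can live in a single quarterly record. This is a hypothetical structure, not a format the report produces: the class, its field names, the brand variants, and the rule that the flag fires when more than one name variant has mentions are all assumptions for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class BrandSnapshot:
    """One quarterly reading for a single brand (hypothetical structure)."""
    quarter: str
    models_recognizing: int     # models that recognize the brand
    answers_with_mention: int   # answers that name the brand
    questions_asked: int        # questions in the brand's test set
    mentions_by_variant: dict = field(default_factory=dict)  # variant -> mentions
    panel_size: int = 17

    @property
    def entity_score(self) -> float:
        return self.models_recognizing / self.panel_size

    @property
    def question_score(self) -> float:
        return self.answers_with_mention / (self.panel_size * self.questions_asked)

    @property
    def quadrant(self) -> str:
        recognized = self.models_recognizing > 0
        mentioned = self.answers_with_mention > 0
        if recognized and mentioned:
            return "Recognized & Recommended"
        if recognized:
            return "Ignored"
        return "Misaligned" if mentioned else "Invisible"

    @property
    def name_variance_flag(self) -> bool:
        # Assumed rule: flag when mentions are split across 2+ name variants.
        return sum(1 for n in self.mentions_by_variant.values() if n > 0) > 1


# Made-up example brand and counts.
snapshot = BrandSnapshot("Q1", 12, 20, 5, {"Acme Corp": 14, "ACME": 6})
print(f"{snapshot.entity_score:.2f}", f"{snapshot.question_score:.3f}")  # 0.71 0.235
print(snapshot.quadrant, snapshot.name_variance_flag)  # Recognized & Recommended True
```

Storing the raw counts rather than the finished scores keeps each quarter comparable even if the number of questions changes.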

Closing: ask the right question

Instead of asking:

“Does AI know us?”

Ask two questions:

- Does AI recognize us when asked? (Entity Score)

- Does AI bring us up when it should? (Question Score)

Because in AI-driven markets, being known is not the same as being chosen. And one score will never tell you the difference.